Solar design software has a funny relationship with geometry. Everyone wants the roof model to be exact, but the earliest version of a project often starts with very little: an address, maybe an energy estimate, a few aerial images, and a customer who expects a proposal before the conversation gets cold.

The interesting product problem is not “can we make a perfect roof model?” It is “can we make a roof model that is useful enough to support design decisions, while clearly knowing where it might be wrong?”

That distinction changes the whole system.

The hard part

For a solar proposal workflow like LightFusion, the roof model sits in the middle of several downstream jobs:

- panel placement needs planes, edges, setbacks, and usable area

- shading analysis needs geometry that behaves well under sun-position sampling

- production estimates need repeatable surfaces and orientations

- AR and 3D review need something that looks understandable to a human

- editing tools need stable objects that can survive user corrections

The first version of this kind of pipeline usually tries to produce one clean mesh and send it everywhere. That works in demos. It breaks in production.

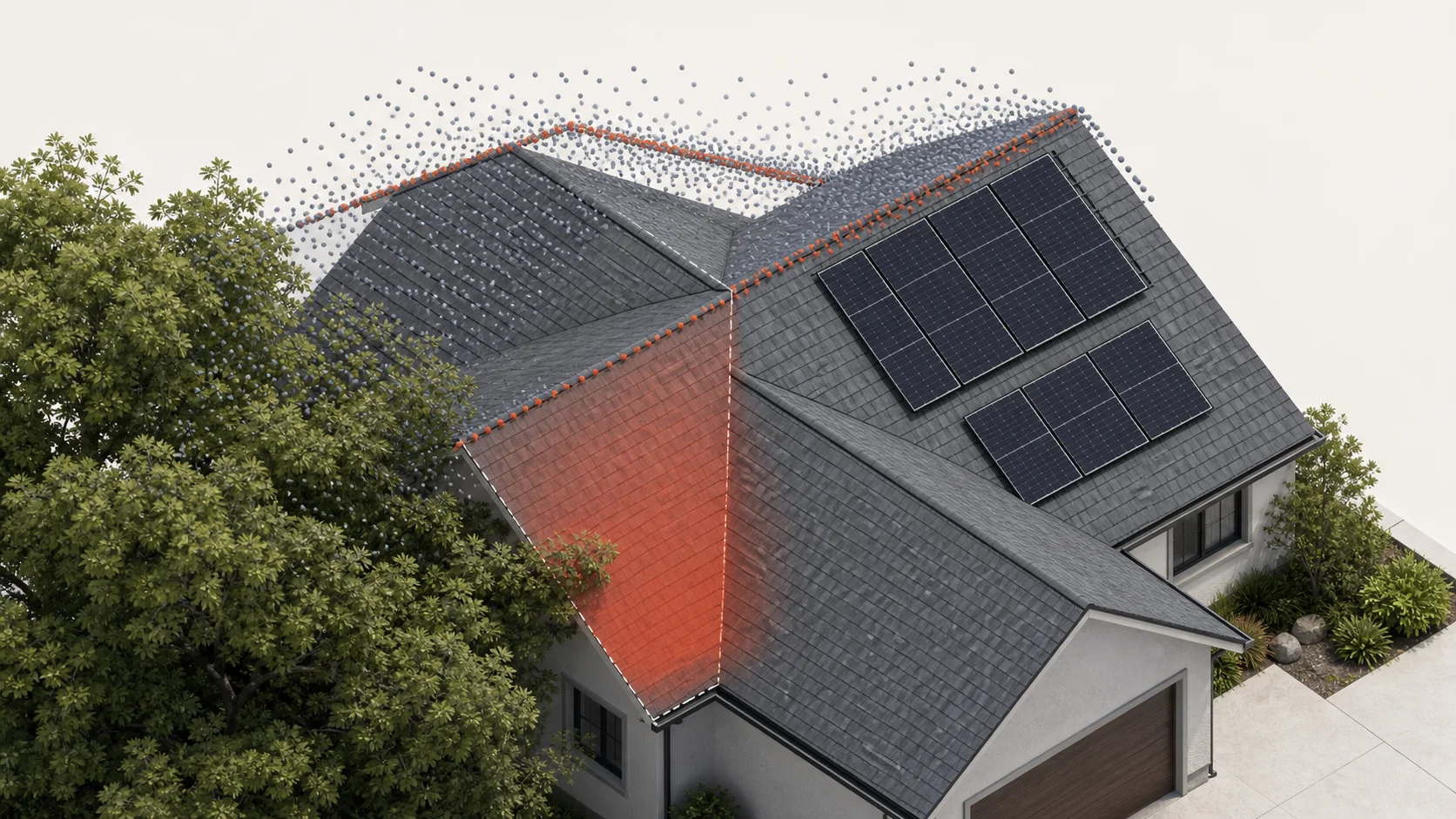

Roofs are messy. Imagery can be stale. Trees hide edges. LiDAR is sometimes sparse, sometimes offset, sometimes absent. Dormers, hips, vents, and parapets create small local contradictions in the data. A model may be visually plausible but numerically awkward, or numerically consistent but confusing in 3D.

If every part of the product treats the generated roof as ground truth, small mistakes become expensive. A slightly wrong ridge can shift panel layout. A noisy surface can create fake shade. A pretty 3D model can hide a bad azimuth. One error becomes five different user-facing problems.

The useful abstraction

The better abstraction is to treat generated geometry as a set of hypotheses, not a single answer.

Instead of producing only a mesh, the pipeline can produce a small structured scene:

- roof planes with confidence scores

- edges with source evidence

- detected obstructions and low-confidence regions

- normalized orientation and pitch estimates

- a lightweight visual mesh derived from the analytical model

- edit operations that preserve semantic objects

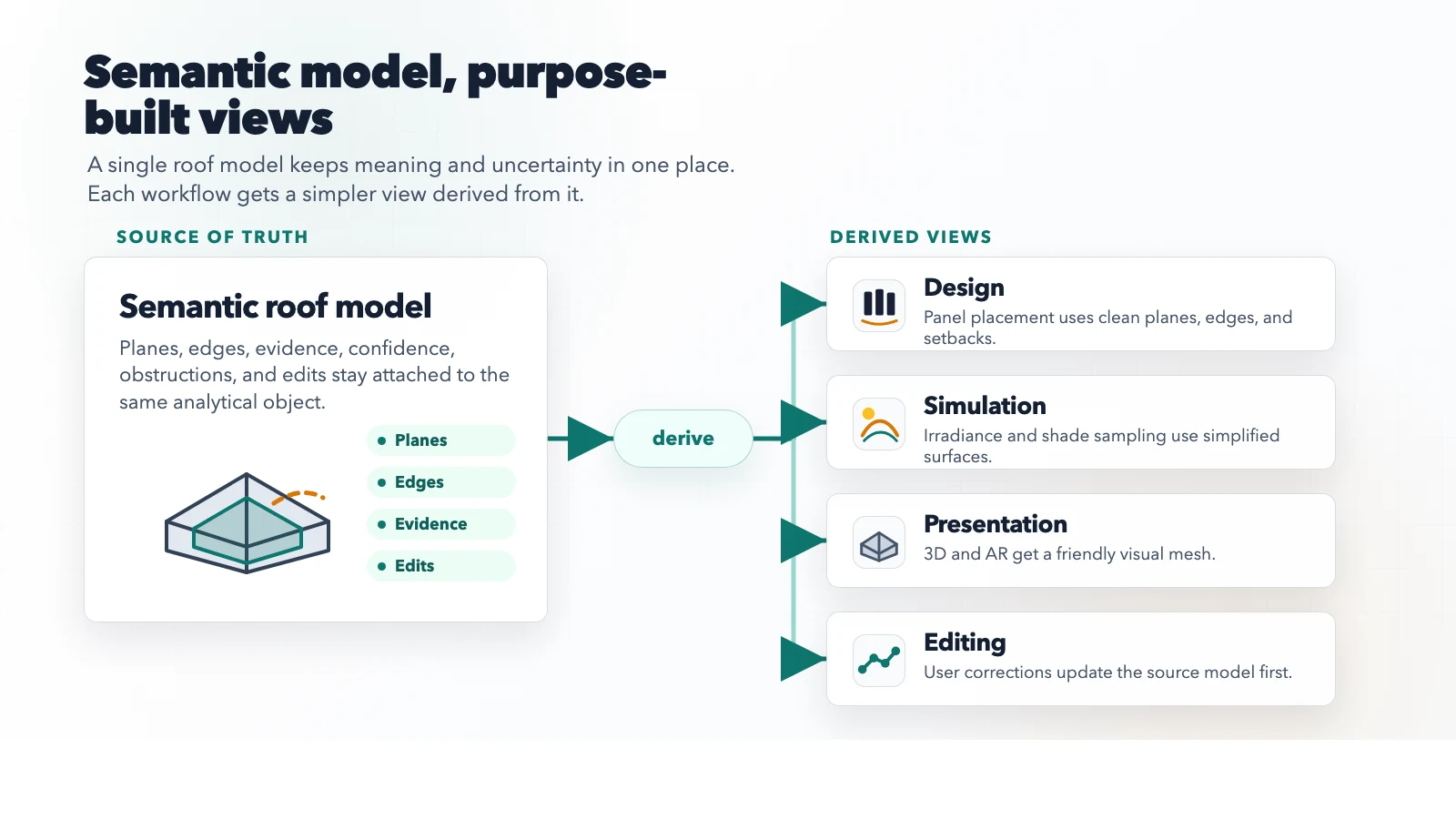

That lets each downstream system use the level of truth it actually needs.

The proposal renderer can use the friendly visual mesh. The irradiance engine can use simplified planes. The editor can expose roof faces and edges as objects instead of triangle soup. The layout optimizer can avoid low-confidence regions until a user confirms them.
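To make the idea of a structured scene concrete, here is a minimal sketch in Python. Every name in it (`RoofPlane`, `RoofEdge`, `Obstruction`, `RoofScene`, `layout_candidates`), the 0-to-1 confidence scale, and the 0.8 threshold are assumptions made for illustration, not a description of any real product's data model.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Point3 = Tuple[float, float, float]


@dataclass
class RoofPlane:
    plane_id: str
    boundary: List[Point3]       # ordered vertices in a local frame, metres
    pitch_deg: float
    azimuth_deg: float
    confidence: float            # assumed scale: 0.0 (guess) to 1.0 (certain)
    user_confirmed: bool = False


@dataclass
class RoofEdge:
    edge_id: str
    kind: str                    # e.g. "ridge", "hip", "valley", "eave"
    plane_ids: Tuple[str, ...]   # planes that share this edge
    evidence: List[str] = field(default_factory=list)  # e.g. ["imagery", "lidar"]


@dataclass
class Obstruction:
    obstruction_id: str
    plane_id: str
    kind: str                    # e.g. "vent", "chimney"
    confidence: float


@dataclass
class RoofScene:
    planes: List[RoofPlane]
    edges: List[RoofEdge] = field(default_factory=list)
    obstructions: List[Obstruction] = field(default_factory=list)

    def layout_candidates(self, min_confidence: float = 0.8) -> List[RoofPlane]:
        """Planes the layout optimizer may use: confident or user-confirmed."""
        return [
            p for p in self.planes
            if p.user_confirmed or p.confidence >= min_confidence
        ]


scene = RoofScene(planes=[
    RoofPlane("south", [], pitch_deg=30.0, azimuth_deg=180.0, confidence=0.93),
    RoofPlane("dormer", [], pitch_deg=40.0, azimuth_deg=90.0, confidence=0.41),
])
usable_ids = [p.plane_id for p in scene.layout_candidates()]
```

In this sketch the low-confidence dormer stays in the scene, so the editor and the renderer can still show it, but the layout optimizer skips it until a user sets `user_confirmed`.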

The key point is unglamorous but important: the system stops pretending that one representation should satisfy every consumer.

How I would solve it

I would split the pipeline into three stages.

First, build a conservative roof skeleton. Detect the large planes, ridges, hips, valleys, and obvious boundaries. Do not chase every detail immediately. The output should be stable, editable, and easy to explain. If the model is unsure about a section, mark it as uncertain instead of forcing precision.
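One way to express "conservative" in code is a triage step that sorts detected planes into accepted, uncertain, and deferred. The sketch below is illustrative only: the `PlaneCandidate` type, the `Status` values, and both thresholds are hypothetical and would need tuning against real detections.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Status(Enum):
    ACCEPTED = "accepted"    # large and well supported: part of the skeleton
    UNCERTAIN = "uncertain"  # large but weakly supported: flag for review
    DEFERRED = "deferred"    # small detail: leave for a later pass


@dataclass
class PlaneCandidate:
    plane_id: str
    area_m2: float
    confidence: float  # assumed scale: 0.0 to 1.0


def triage(
    candidates: List[PlaneCandidate],
    min_area_m2: float = 4.0,       # hypothetical cutoff for "large plane"
    min_confidence: float = 0.75,   # hypothetical cutoff for "sure enough"
) -> Dict[str, Status]:
    """Label each candidate instead of forcing every one into the model."""
    result: Dict[str, Status] = {}
    for c in candidates:
        if c.area_m2 < min_area_m2:
            result[c.plane_id] = Status.DEFERRED
        elif c.confidence < min_confidence:
            result[c.plane_id] = Status.UNCERTAIN
        else:
            result[c.plane_id] = Status.ACCEPTED
    return result


labels = triage([
    PlaneCandidate("main-south", area_m2=42.0, confidence=0.95),
    PlaneCandidate("rear-under-tree", area_m2=30.0, confidence=0.50),
    PlaneCandidate("vent-cap", area_m2=0.3, confidence=0.90),
])
```

The point of the three-way split is that nothing is silently dropped or silently trusted: the tree-covered plane is kept but marked, and the vent cap waits for a later pass.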

Second, run a constraint pass. Adjacent planes should meet cleanly. Edges should snap only when the evidence is strong enough. Tiny surfaces should be merged unless they matter for layout or shading. Pitch, azimuth, and area should be recalculated from the cleaned analytical model, not from whatever triangles happened to survive detection.
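As a rough illustration of recalculating pitch, azimuth, and area from the cleaned model, the sketch below derives all three from a plane's boundary polygon using Newell's method for the polygon normal. The function name `plane_metrics` and the coordinate convention (x east, y north, z up, metres, azimuth as a compass bearing clockwise from north) are assumptions chosen for the example.

```python
import math
from typing import List, Optional, Tuple

Point3 = Tuple[float, float, float]


def plane_metrics(boundary: List[Point3]) -> Tuple[float, float, Optional[float]]:
    """Return (area_m2, pitch_deg, azimuth_deg) for a planar roof face.

    Assumed frame: x = east, y = north, z = up. Azimuth is the compass
    bearing the face looks toward, or None for a flat face.
    """
    nx = ny = nz = 0.0
    # Newell's method: accumulate the polygon's area-weighted normal.
    for (x1, y1, z1), (x2, y2, z2) in zip(boundary, boundary[1:] + boundary[:1]):
        nx += (y1 - y2) * (z1 + z2)
        ny += (z1 - z2) * (x1 + x2)
        nz += (x1 - x2) * (y1 + y2)

    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        raise ValueError("degenerate boundary: zero area")
    area = 0.5 * length

    # Make the normal point upward regardless of vertex winding.
    if nz < 0.0:
        nx, ny, nz = -nx, -ny, -nz

    pitch = math.degrees(math.acos(min(1.0, nz / length)))
    horizontal = math.hypot(nx, ny)
    if horizontal < 1e-9 * length:
        azimuth = None  # flat face: no meaningful facing direction
    else:
        azimuth = math.degrees(math.atan2(nx, ny)) % 360.0
    return area, pitch, azimuth


# A 4 m wide face rising 2 m over 2 m of run, eave on the south side.
south_face = [(0.0, 0.0, 0.0), (4.0, 0.0, 0.0), (4.0, 2.0, 2.0), (0.0, 2.0, 2.0)]
area, pitch, azimuth = plane_metrics(south_face)
```

For this face the normal tilts toward the south, so the sketch reports a 45 degree pitch and a 180 degree azimuth. Because the numbers come from the plane boundary rather than from individual mesh triangles, a noisy triangulation cannot skew them.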

Third, generate separate views from the same source of truth:

- a design view for panel layout

- a simulation view for shading and irradiance

- a presentation view for 3D and AR

- an edit view for human corrections

Each view can simplify the geometry differently, but all of them trace back to the same semantic roof model. When a user drags an edge, deletes a false obstruction, or splits a plane, the analytical model changes first and the derived views update after that.
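The "model first, views after" ordering can be sketched as a small workspace object that owns the semantic model and rebuilds every derived view after each edit. All names here (`SemanticRoof`, `RoofWorkspace`, `design_view`, `presentation_view`, `delete_obstruction`) and the dictionary-based plane records are hypothetical simplifications.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class SemanticRoof:
    planes: Dict[str, dict]                       # plane_id -> {"area_m2": ...}
    obstructions: Dict[str, dict] = field(default_factory=dict)
    version: int = 0                              # bumped on every edit


ViewBuilder = Callable[[SemanticRoof], Any]


class RoofWorkspace:
    """Owns the semantic model; views are always derived, never edited."""

    def __init__(self, roof: SemanticRoof, builders: Dict[str, ViewBuilder]):
        self.roof = roof
        self._builders = builders
        self.views: Dict[str, Any] = {}
        self._rebuild()

    def apply(self, edit: Callable[[SemanticRoof], None]) -> None:
        edit(self.roof)          # 1. change the analytical model
        self.roof.version += 1
        self._rebuild()          # 2. regenerate every derived view

    def _rebuild(self) -> None:
        self.views = {name: build(self.roof) for name, build in self._builders.items()}


def design_view(roof: SemanticRoof) -> Dict[str, float]:
    # Usable area per plane: plane area minus obstruction footprints on it.
    usable = {pid: p["area_m2"] for pid, p in roof.planes.items()}
    for o in roof.obstructions.values():
        usable[o["plane_id"]] -= o["area_m2"]
    return usable


def presentation_view(roof: SemanticRoof) -> Dict[str, int]:
    return {"planes": len(roof.planes), "obstructions": len(roof.obstructions)}


def delete_obstruction(obstruction_id: str) -> Callable[[SemanticRoof], None]:
    def edit(roof: SemanticRoof) -> None:
        del roof.obstructions[obstruction_id]
    return edit


workspace = RoofWorkspace(
    SemanticRoof(
        planes={"south": {"area_m2": 40.0}},
        obstructions={"o1": {"plane_id": "south", "area_m2": 1.5}},
    ),
    {"design": design_view, "presentation": presentation_view},
)
before = workspace.views["design"]["south"]
workspace.apply(delete_obstruction("o1"))   # user removes a false obstruction
after = workspace.views["design"]["south"]
```

Rebuilding every view on every edit is the simplest possible policy. A real system would probably rebuild incrementally, but the invariant worth keeping is the same: no view is ever edited directly.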

Why this matters

This is the kind of architecture that makes an AI-assisted workflow feel calm and predictable rather than impressive but brittle.

When the model is right, the user gets a proposal quickly. When the model is partially wrong, the product still has a graceful path: show uncertainty, keep the objects editable, and let corrections improve the rest of the workflow. The software does not need to act embarrassed by imperfect data. It just needs to make the imperfection useful.

The best version of this system would make the first, generated design feel fast and the second, human-adjusted design feel trustworthy. That is where AI in solar design becomes more than automation. It becomes a partner in the parts of the job that used to be slow, repetitive, and oddly fragile.

The roof does not have to be perfect at minute one. It has to be honest, structured, and ready to get better.